Copyright © Roger K. Moore

PROGRESS & PROSPECTS IN SPEECH TECHNOLOGY

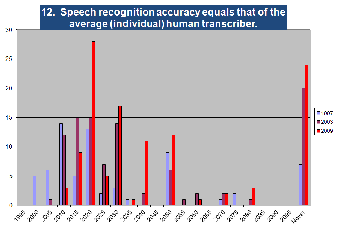

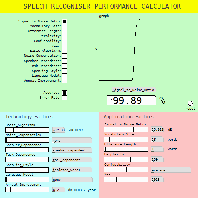

Having been actively involved in speech technology R&D for over four decades, I’m often called upon to deliver my personal perspective on the progress that’s been made in the past and the prospects we’re likely to witness in the years to come. In order to inform these views, I’ve not only conducted a number of surveys of the speech technology R&D community, but I’ve also exploited the ability of my Human Equivalent Noise Ratio (HENR) model to extrapolate automatic speech recogniser performance into the future.

In addition, I maintain a personal timeline of significant events in our field (including some infamous quotations and notable predictions) which it is hoped will provide a useful resource for students and researchers interested in learning how the speech technology field has developed over the years.

2029

Predictions: “implants are used to provide input and output between the human user and the world-wide computer network”, “automated agents are learning on their own”, “the majority of communications involving a human is between a human and a machine”, “growing discussion on what constitutes being human” [Kurzweil, R. (1999). The Age of Spiritual Machines: Phoenix Press.]

2020

Prediction: “we can translate your voice as good as a human” [Xuedong ‘XD’ Huang (2015). Talk at Purdue University.]

2019

Predictions: “most interaction with computing is through gestures and two-way natural-language spoken communication”, “deaf persons read what other people are saying through their lens displays”, “the vast majority of transactions include a simulated person”, “people are beginning to have relationships with automated personalities” [Kurzweil, R. (1999). The Age of Spiritual Machines: Phoenix Press.]

2018

May: Microsoft acquires Semantic Machines to bolster its efforts in 'conversational AI'.

May: Hackers use 'voice squatting' to intercept commands to Alexa and Google Home.

May: Microsoft and Amazon give the first public demonstration of Alexa-Cortana integration.

May: Google demonstrates its powerful dialogue system 'Duplex', which raises ethical concerns about deceiving users.

May: Alexander Graham Bell's voice is recovered from an 1885 wax disc.

May: Amazon announces a plan to hire 2,000 employees in Boston's Seaport district who will focus on developing Alexa.

Apr: Google demonstrates an ability to isolate a single voice in a crowd using audio-visual information.

Apr: Microsoft demonstrates their voice assistant's ability to predict pauses and interrupt conversations.

Apr: Linguist Maurice Halle passes away aged 94.

Mar: Google offers text-to-speech technology on its cloud.

Mar: Stephen Hawking, the world's most famous user of speech synthesis, dies.

Mar: Alexa owners report being startled by Alexa's phantom chuckles.

Mar: ELSA raises $3.2M for its AI-powered English pronunciation assistant.

Mar: Roberto Pieraccini leaves Jibo and joins Google in Zurich.

Jan: Apple files patent for the recognition of whispered voice commands.

Jan: Voice assistants dominate the exhibits at CES.

2017

Dec: Google releases stunning audio examples from its Tacotron 2 text-to-speech synthesiser.

Dec: A therapist chatbot called Woebot engages in 2 million conversations a week.

Nov: Speechmatics adds 46 languages in just six weeks, bringing its total to 72 unique languages.

Nov: Will.i.am's tech company launches the Omega voice assistant.

Nov: Amazon awards $500K first place Alexa Prize to Mari Ostendorf's team at the University of Washington.

Nov: Jibo makes the front cover of Time Magazine as one of the 25 best inventions of 2017.

Nov: Apple reveals that their HomePod smart speaker will be delayed until 2018.

Nov: Sony announces the release of its new Aibo robot.

Nov: Speechmatics launches a system that can learn and transcribe a new language in days.

Oct: Microsoft discontinues production of Kinect.

Oct: Amazon and Intel release a hardware development kit for Alexa.

Oct: The Spoken Wikipedia Corpus is released, consisting of hundreds of hours of audio from several hundred speakers, time-aligned to Wikipedia articles in German, English and Dutch.

Oct: Google announces the Home Mini.

Sep: AI pioneer Geoff Hinton says we need to start over.

Sep: Furhat Robotics partners with IBM Watson.

Sep: Lotfi A. Zadeh (father of fuzzy logic) passes away at the age of 96.

Aug: Amazon and Microsoft announce that Alexa and Cortana will be able to talk to each other by the end of 2017.

Aug: The Kaldi speech recognition toolkit gains support for deep learning using TensorFlow.

Aug: The Royal Institution announces that the 2017 Christmas Lecture will be 'The Language of Life' by Prof. Sophie Scott.

Aug: John Hansen becomes ISCA's new President.

Aug: Microsoft's Xuedong Huang claims that Microsoft's speech recognition has reached parity with humans.

Aug: Amazon opens up access to developer tools for adding Alexa to commercial products.

Aug: A hacker succeeds in turning Amazon Echo into a covert microphone.

Jul: Cambridge University releases 'Pydial' - a Python toolkit for statistical dialogue systems.

Jul: The US FBI warns of privacy risks associated with Internet-connected toys.

Jul: Mozilla announces that it is crowdsourcing a massive speech-recognition system.

Jul: Former speech recognition researcher and former astronaut Julie Payette is appointed Governor General of Canada.

Jul: Alibaba launches Tmall Genie as a competitor to Amazon Echo, Google Home and Apple HomePod.

Jul: Baidu and Conexant announce a development kit for voice-enabled AI devices.

Jun: Mari Ostendorf wins the IEEE James L Flanagan Speech and Audio Processing Award.

Jun: Eric Vatikiotis-Bateson, pioneer in audio-visual speech processing, passes away.

Jun: Microsoft’s Dictate uses Cortana’s speech recognition to enable dictation in Office.

Jun: Softbank acquires Boston Dynamics.

Jun: Apple announce 'HomePod' - a Siri-based speaker.

May: After 17 years at Microsoft, Li Deng moves to Citadel, one of the world's largest investment firms, as Chief AI Officer.

May: Baidu announces 'Deep Voice 2: Multi-Speaker Neural Text-to-Speech'.

May: Google announces its speech recognition error rate is now under 5%.

May: MarketWatch values text-to-speech market at $3B by 2022.

May: Google produces a 'do-it-yourself AI' (AIY) Voice HAT board for the Raspberry Pi.

Apr: Amazon announces that Alexa can now whisper, bleep out swear words, and change its pitch.

Apr: Amazon releases Echo Look.

Apr: Lyrebird claims that it can clone anyone's voice using just one minute of audio.

Apr: Amazon opens up the deep learning technology behind Alexa for building voice-powered chatbots.

Apr: Skype announces that it is now able to translate voice and video calls to Japanese.

Mar: Google releases ‘AudioSet’, a corpus of >2M annotated audio clips.

Feb: LDC Director Mark Liberman receives the IEEE James L. Flanagan Speech and Audio Processing Award.

2016

Dec: Hugh Loebner, founder and sponsor of The Loebner Prize, an annual embodiment of the Turing Test, passes away.

Sep: Google's DeepMind claims major milestone in making machines talk like humans using WaveNet, a deep generative model of raw audio waveforms.

Sep: Harman and Baidu form a global partnership to develop speech-enabled smart speakers.

Aug: It is reported that dogs understand both the vocabulary and intonation of human speech.

Aug: UC Berkeley launches the Center for Human-Compatible Artificial Intelligence.

Aug: A study at Stanford finds that voice recognition software finally beats humans at typing.

Aug: Google announces that it is looking for Scots talkers to help learn regional dialects.

Jul: It is reported that an orangutan has learned to mimic human conversation for the first time.

Jul: SoftBank and Honda announce that they want to build a talking car that can empathise with users.

Jul: Google announces the public beta launch of its Cloud Natural Language API, which gives developers access to Google-powered sentiment analysis, entity recognition and syntax analysis.

Jul: According to a report by MarketsandMarkets the Natural Language Processing market is estimated to grow from USD 7.63 Billion in 2016 to USD 16.07 Billion by 2021, at a Compound Annual Growth Rate of 16.1%.
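The compound annual growth rates (CAGRs) quoted in market reports like this one can be sanity-checked with the standard compound-growth formula, CAGR = (end / start)^(1 / years) - 1. The short Python sketch below applies it to the MarketsandMarkets NLP figures; it assumes the growth is measured over the five years from 2016 to 2021 and is only an illustrative check, not the report's own methodology. The helper name `cagr` is hypothetical.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Return the compound annual growth rate implied by growing from
    start_value to end_value over the given number of years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    # Annual growth factor is the years-th root of the total growth ratio.
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Assumed inputs: USD 7.63 billion (2016) growing to USD 16.07 billion (2021).
rate = cagr(7.63, 16.07, 2021 - 2016)
print(f"Implied CAGR: {rate:.1%}")
```

This prints `Implied CAGR: 16.1%`, consistent with the figure quoted in the report.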

Jun: Cambridge speech recognition technology startup Speechmatics seeks up to 20 new recruits in a rapid scale-up to support Universal Time Alignment – a language-independent forced-alignment service.

Jun: According to a market research report by MarketsandMarkets, the speech recognition market is expected to grow from USD 3.73 Billion in 2015 to USD 9.97 Billion by 2022, at a CAGR of 15.78%, while the voice recognition market is expected to grow from USD 440.3 Million in 2015 to USD 1.99 Billion by 2022, at a CAGR of 23.66%.

Jun: A study by Creative Strategies finds that only 3% of iPhone owners are comfortable using Siri in public, for fear of embarrassment.

Jun: Prisoners’ code word caught by software developed by London firm Intelligent Voice that eavesdrops on calls.

May: Indiegogo startup Jibo announces an SDK that allows developers to create skills using a JavaScript API that provides access to the more computationally-intensive parts of the Jibo platform such as audio and speech technology, visual processing, interaction and movement capabilities.

May: Prof. Roger Moore awarded the Zampolli Prize at the LREC conference.

May: Waverly Labs announces an in-ear device - The Pilot - that will allow the wearer to understand one of several foreign languages through real-time translation.

May: Google makes its parsing tool - Parsey McParseface - open source.

May: The team behind Siri debuts its next-gen AI “Viv” at Disrupt NY 2016.

Apr: Research and Markets reports that the global speech recognition software market is projected to grow at a CAGR of 16.99% during the period 2016-2020.

Apr: Prof. Sir David MacKay dies.

Apr: Google opens up its 80-language Cloud Speech API to developers.

Apr: Study finds the 35 most used words by people playing with dogs.

Apr: Protonet announces a competitor to Amazon’s Echo named Zoe with a mobile interface and 1,500 voice-commands.

Apr: Baidu and Peel announce collaboration in which Baidu's Deep Speech technology will be integrated into Peel's AI-based platform for home control to create voice-enabled smart home products.

Mar: VoiceBox Technologies unveils its new embedded automatic speech recognition product for automotive applications.

Mar: Microsoft releases a new version of the Microsoft Translator API which adds real-time speech translation capabilities to the existing text translation API in both the Skype and the Microsoft Translator app.

Mar: Google opens access to its speech recognition API.

Mar: ABC News's attempt to caption the Canadian Prime Minister's remarks in French using speech recognition software produces some wacky results, as the software mistakes the French for English.

Mar: Google puts Boston Dynamics up for sale.

Mar: Google announces that it is working on faster, more accurate voice recognition that doesn't need an internet connection.

Mar: Wikimedia Sweden and KTH announce their intention to build and give away crowdsourced speech synthesis code that will plug into any MediaWiki-based site.

Feb: TED announces the IBM Watson AI X Prize of $4.5m, to be awarded to an AI that can convince an audience that it has mastered the art of the 18-minute TED talk at the 2020 TED conference.

Feb: VocalZoom and Cobalt partner to deliver a disruptive end-to-end solution for voice control in the connected car, head-mounted devices and access control applications.

Feb: Pascale Fung, Professor at HKUST, joins the Editorial team of Computer Speech and Language.

Feb: Joe Petro, senior vice president of Nuance healthcare R&D, states that "From an accuracy point of view, we’re on the last mile".

Jan: Report published by Allied Market Research predicts the world Intelligent Virtual Assistant (IVA) market to reach $3.6 Billion by 2020.

Jan: Xuedong Huang, Microsoft's Chief Speech Scientist, is quoted as saying "Speech recognition is really close to reaching parity with humans, in the next three years" but adds "understanding is a different story".

Jan: Marvin Minsky dies.

Jan: Microsoft moves its deep learning CNTK toolkit to GitHub.

Jan: VocalZoom signs an agreement to integrate its technology alongside iFLYTEK.

Jan: Amazon announces that Amazon Echo’s Alexa is now able to read Kindle books aloud.

Jan: Baidu releases Warp-CTC - deep learning software used to build their Deep Speech 2 speech recognition system.

Jan: The 15th annual Deloitte Technology, Media & Telecommunications Predictions report states that the most persuasive developments of 2016 will be in cognitive technologies such as speech recognition, natural language processing and machine learning.

Jan: OnMobile divests its speech technology assets to France’s Voicebox Technologies for €650,000.

Jan: SoundHound Inc. collaborates with NVIDIA to bring deep learning-based natural language understanding to cars.

2015

Dec: Semantic Machines, a startup with artificial intelligence technology and talent from Apple and Google (such as Larry Gillick and Dan Roth), raises $12.3 million in new funding.

Dec: Baidu claims voice recognition is now competitive with humans in some settings.

Dec: Microsoft releases speech and video recognition APIs from ‘Project Oxford’.

Dec: Li Deng, partner research manager in Microsoft's Redmond, Wash. lab, receives the 2015 IEEE Signal Processing Society Technical Achievement Award for outstanding contributions to deep learning and to automatic speech recognition.

Dec: Xuedong Huang (chief speech scientist at Microsoft) says we're less than five years away from computers understanding us perfectly.

Nov: Microsoft says voice recognition will replace browser search.

Nov: According to Global Voice, the voice recognition market for smartphones is set to grow at a CAGR of 10.3% over the period 2014-2019.

Nov: Google launches 'TensorFlow' open-source machine learning platform.

Nov: According to Transparency Market Research, the Intelligent Virtual Assistant market is set to reach US$5.1 billion by 2022.

Oct: Andrea Paoloni (FUB, Italy) passes away.

Oct: Chrome no longer supports “OK Google” voice command.

Oct: Roy Patterson and John Ohala are named as recipients of the ASA Silver Medal.

Oct: The Intelligent Virtual Assistant market is forecast to grow at a CAGR of 31.8% from 2015 to 2022.

Oct: Apple acquires VocalIQ.

Sep: Speech recognition company Uniphore opens Bengaluru office and plans to hire 100 people in 15 months.

Sep: Prof Hiroya Fujisaki receives the ISCA Special Service Medal during the opening ceremony at Interspeech 2015 in Dresden.

Aug: Jim Flanagan, former head of the Acoustics Research Department at AT&T Bell Labs and pioneer of speech processing, passes away on the day before his 90th birthday.

Aug: Facebook launches M, a new virtual assistant powered by AI as well as by a team of Facebook employees, dubbed M trainers, who make sure that every request is answered.

Aug: Tractica claims that speech recognition will achieve 82% market penetration in mobile devices by 2020.

Aug: Intel releases Stephen Hawking's speech software as open source, as part of its Assistive Context-Aware Toolkit (ACAT) system.

Aug: Nuance announces Dragon Anywhere, a cloud-connected dictation service that works in tandem with updated versions for the Mac and PC.

Aug: US teenager who was pinned under a truck uses his bottom to activate Siri, which called emergency services.

Aug: Interactions announce the expansion of its speech and natural language technology R&D with the establishment of new labs located in Murray Hill and Manhattan.

Aug: PNAS paper claims 3D-printed device can help computers solve the cocktail-party problem.

Aug: MIT claims to have found a “language universal” that ties all languages together.

Jul: Google says it has reduced transcription errors by 49% in Google Voice and Project Fi using long short-term memory (LSTM) deep recurrent neural networks.

Jul: Li Deng from Microsoft Research gives an interview in which he says that "all the low-hanging fruit [in Deep Learning] has been taken".

Jul: Elon Musk spends $10 million on new projects to control AI.

Jun: Amazon's Echo can be purchased by anybody for at least $149.

Jun: Facebook opens up a new lab in Paris to tackle AI, image and speech processing.

Jun: Roy Patterson is awarded the Silver Medal of the Acoustical Society of America.

Jun: According to a report from Tractica, worldwide licenses for voice and speech recognition software will increase from $49.0M in 2015 to $565.8M by 2024 and the voice-based biometric market will grow from $249M to $5.1B.

Jun: Apple claims that Siri's speech recognition is more accurate than Google's.

Jun: Ray Kurzweil predicts that human brains will merge with artificial intelligence in the 2030s.

Jun: The Welsh Government announces a £1M investment in speech technology.

Jun: SoundHound introduces a super-fast speech recognition plus natural language processing system called 'Hound'.

May: Google and IBM announce that the speech recognition error rate on conversational speech is now at 8%.

May: Disney announces “Visually Consistent Acoustic Redubbing”, which detects facial expressions and lip movements in a video and maps them to different phone sequences synchronised with the video.

May: Adam Kilgarriff, linguist and person behind SketchEngine, dies of cancer.

May: Microsoft makes its Skype Translator preview available to anyone who wants to download it.

May: U.S. prosecutors request to use evidence taken from biometric voice recognition software in a trial of three reputed members of a terrorist organization - the first time in a federal trial in the U.S.

May: Anne Cutler is elected a Fellow of the Royal Society (FRS).

Apr: Fujitsu introduces "LiveTalk," a participatory communications tool that uses speech-recognition technology to enable people with normal hearing and people with hearing disabilities to share information in real time.

Apr: Interactions (the customer support company that acquired AT&T’s ‘Watson’ speech recognition platform) raises $9.5M.

Mar: John McDonough (CMU) dies of an unexpected heart attack.

Mar: Google Chrome adds automatic speech recognition.

Mar: Sirius open-source virtual assistant gets Google funding.

Mar: Rumours in the technical press that Microsoft is looking at porting Cortana to Apple iPhone and Android smartphones.

Mar: Report declares that the global speech analytics market is expected to reach $1.33 billion by 2019.

Feb: DARPA announces its new 'Communicating with Computers' (CwC) programme.

Feb: In an interview for IEEE Spectrum, Yann LeCun (Facebook's director of AI) states that "The next frontier for Deep Learning is natural language understanding".

Feb: Controversy erupts after Mattel announces a partnership with US start-up ToyTalk to develop 'Hello Barbie' (dubbed 'Spy Barbie' by the media), which will have two-way conversations with children.

Feb: Japanese 'conversational' robot - "Kirobo" - returns to Earth after 18 months in the International Space Station.

Feb: Honeywell tests voice-recognition on flight decks.

Feb: UK company Therapy Box launches an app designed to substitute standard text-to-speech synthesis with a synthesizer based on the patient’s own voice for amyotrophic lateral sclerosis (ALS) patients.

Feb: Steve Young receives the IEEE James L. Flanagan Speech and Audio Processing Award for his pioneering work in speech technology.

Feb: Furore when Samsung warns customers to avoid discussing personal information in front of their smart TV.

Feb: European Society for Cognitive Systems formed (to replace EU-funded EUCognition network).

Feb: Art Blokland, a former researcher at CSTR who was working at Toshiba, dies of cancer.

Jan: Robotics startup Jibo raises $25M and hires Steve Chambers (from Nuance) as its CEO.

Jan: Facebook tests automatic speech recognition for voice clips in its Messenger app.

Jan: Google Translate smartphone app now has the ability to translate speech and signs in real time.

Jan: TechNavio forecasts that the global voice recognition market will grow at 9.4% per year from 2015 to 2019.

Jan: UK-based Therapy Box releases an app that allows people with limited vocal ability to use a speech synthesiser based on their own voice.

Jan: Rumour spreads that Nuance could be acquired by Baidu.

Jan: Facebook acquires the speech-recognition start-up Wit.ai.

2014

Dec: Andrew Ng (Chief Scientist of Baidu Research) announces 'Deep Speech', a new method of recognising speech that beats those offered by Google and Apple on standard benchmarks.

Dec: Microsoft goes live with Skype Translator.

Dec: Interactions buys AT&T Watson speech recognition and natural language understanding technology.

Dec: Stephen Hawking warns that the development of full artificial intelligence could spell the end of the human race.

Nov: Jim & Janet Baker lose their appeal against Goldman Sachs over L&H's buyout of Dragon Systems.

Nov: Amazon unveils Echo, a voice-controlled interactive speaker that lets people stream music and search the Internet.

Nov: Microsoft offers up Skype Translator feature for preview.

Oct: Tesla CEO Elon Musk causes controversy by stating that "we are creating the demon with AI".

Oct: Google releases a video entitled 'The Science of Talking with Computers'.

Sep: Fergus McInnes, an established speech technology researcher at CSTR in Edinburgh, goes missing in Switzerland while on a journey to IDIAP in Martigny.

Sep: McDonald's UK site integrates Google speech recognition to help customers share memories for its 40th Anniversary.

Aug: Geoff Leech, pioneer of English corpus linguistics, dies.

Aug: Gartner publishes its 'hype cycle' for the Internet of Things, which shows automatic speech recognition coming out the other side of the hype and making money.

Jul: MIT's Cynthia Breazeal and colleagues unveil Jibo, a social robot for the home, available for pre-order on Indiegogo for US$499 and due to ship in 2015.

Jul: Transparency Market Research announce that the Intelligent Virtual Assistant market is expected to reach USD 2,126.4 Million in 2019.

Jun: Nexidia announce neural phonetic speech analytics.

Jun: Amadeus Capital Partners leads a £750K seed funding round for Cambridge University’s latest spinout VocalIQ.

Jun: A rumour circulates that Samsung may be interested in purchasing Nuance.

Jun: A furore breaks out after Prof. Kevin Warwick announces that a chatbot (posing as a 13-year-old Ukrainian boy called Eugene Goostman) passed the Turing Test at an event hosted by the Royal Society.

Apr: Apple confirms that it acquired Novauris Technologies sometime during 2013.

Mar: Thad Starner at the Georgia Institute of Technology demonstrates a prototype dolphin translator called Cetacean Hearing and Telemetry (CHAT).

Mar: Google's Larry Page admits "Speech Recognition is Not Very Good".

Mar: A report by MarketsandMarkets predicts that the speech analytics market will be worth $1.33 Billion by 2019.

Feb: Professor Graeme Clark is among 76 staff who are made redundant or do not have their contracts renewed at Australia’s National Information Communications Technology Centre.

Jan: Samsung announces smart home system with voice control.

Jan: Japanese Kirobo becomes first humanoid robot with speech capability in space.

2013

Dec: Google buys Boston Dynamics.

Nov: Analyst firm TechNavio forecasts that the global voice recognition market will grow at a compounded annual growth rate of 22% over the period 2012-2016.

Nov: Survey claims 46% of people think that Apple oversold Siri’s voice capabilities.

Nov: Clifford Nass (author of “Wired for Speech”) dies.

Sep: Bruce Lowerre (producer of the ground-breaking HARPY continuous speech recognition system in 1976) is killed after crashing his home-built seaplane into Lake Okeechobee in Florida.

Sep: David Pallett (pioneer of speech recogniser evaluation methodologies) dies.

Aug: Alex Acero leaves Microsoft Research to take up a position of Senior Director at Apple.

Aug: Ken Stevens (speech research pioneer at MIT) dies.

Aug: Amazon advertises several positions in speech technology in Boston, Seattle, Cupertino, Gdynia, Aachen and Cambridge.

Aug: Facebook acquires Pittsburgh-based Mobile Technologies, the firm behind app Jibbigo, which translates recorded or written text in over 25 languages and reads it aloud in different languages.

Aug: JAXA rocket carrying talking KIROBO robot departs for the International Space Station.

Aug: Alain Marchal (French expert on speech production) dies.

Jul: Lucy Hawking presents a BBC Radio 4 programme on the history of speech synthesis entitled "Klatt's Last Tapes".

Jul: Beyond Verbal obtains $1M for emotion-decoding voice recognition software.

Jul: Study suggests Neanderthals shared speech and language with modern humans.

Jul: Talking train window adverts being tested by Sky Deutschland.

Jun: Translate Your World announced the release of TYWI Web Interpreter ("tie-wee"), the real-time voice translation software which offers simultaneous interpretation on the Web plus automated interpretation in up to 78 languages.

Jun: Michael Tjalve from the speech technology group at Microsoft explains that Microsoft will be using deep neural networks for speech recognition, allowing Microsoft phones to respond twice as fast as before.

Jun: AAA publish study suggesting that voice-activated technology creates a safety risk for drivers by taking a driver’s mind, if not eyes, off the road.

May: Nuance acquires Tweddle Connect for $80M as part of its push to bring its speech recognition capabilities into cars.

Apr: Amazon acquires voice recognition app Evi.

Mar: A £1.9 million ($2.9 million) project to introduce a dictation system into five hospitals in the West Yorkshire city of Leeds runs into serious problems due to patient complaints about the speech recognition system.

Mar: Geoffrey Hinton joins Google.

Jan: Jim & Janet Baker lose their Dragon/L&H case against Goldman Sachs.

Jan: Amazon acquires text-to-speech firm Ivona Software.

Jan: IBM bans Siri because “The company worries that the spoken queries might be stored somewhere.”

2012

Dec: Ray Kurzweil joins Google as Director of Engineering.

Nov: Microsoft give a public demonstration of real-time English to Mandarin speech translation.

Nov: It is revealed that Birmingham Council's £11m automated phone system can't understand Brummie accents.

Oct: Google unveils artificial intelligence software that can recognise the faces of cats, people and other things after training on YouTube videos.

Aug: Nuance announce their new mobile customer service app, dubbed Nina, as a competitor to Apple's Siri.

Jul: Nuance announce NaturallySpeaking 12 and claim it to be 20% more accurate than its predecessor.

Jul: Kate Knill steps down as Assistant Managing Director, Cambridge Research Lab at Toshiba Research Europe Limited to become a Senior Research Associate at the University of Cambridge.

Jul: AT&T released its Watson speech API to developers.

Jun: A report released by Piper Jaffray Cos. (Minneapolis-based investment bank) concludes that Apple's Siri doesn’t work all that well. Of 1,600 common searches, the speech technology accurately resolved the request 62% of the time on a loud street and 68% in a quieter setting.

Jun: Nuance opens its International Headquarters in Dublin.

Mar: GIA releases a report in which the world Speech Technology market is forecast to reach US$31.3 billion by the year 2017.

Feb: According to a survey carried out by Call Centre Helper, only 18% of contact centres that fronted their calls with an Interactive Voice Response (IVR) system used it in combination with speech recognition.

Feb: Novauris Technologies Ltd announce a partnership with Existor Ltd. to create a new generation of human-machine interaction.

Feb: Gesture-to-voice-synthesizer technology at the University of British Columbia makes it possible for a person to speak or sing just using their hands.

Feb: Android release Utter! as a response to Apple's Siri.

Jan: Roberto Pieraccini leaves SpeechCycle and is appointed Director of ICSI.

Jan: Novauris Technologies announce an embedded automatic speech recognition (ASR) strategic partnership with Panasonic System Networks in Japan, including the joint development of NovaLite™, a software automatic speech recognizer to be offered to consumer electronics manufacturers who want to voice-enable their products.

2011

Dec: Nuance Communications announce plans to acquire rival speech company Vlingo.

Nov: Winscribe, the world’s largest provider of digital dictation software, announces that it has acquired 100% of SRC, the UK’s leading healthcare dictation and speech recognition provider.

Nov: Jon Briggs, a former technology journalist turned experienced voiceover artist, reveals that he voices the British version of Siri.

Oct: Aurix, a provider of speech recognition and analytics solutions for the contact centre market, becomes a wholly owned subsidiary of Avaya, a global provider of business communications and collaboration systems and services.

Oct: Sir Terry Pratchett, author of ‘Discworld’, reveals that he has become a devotee of voice recognition technology since losing his ability to type effectively due to Alzheimer’s disease.

Oct: Apple release 'Siri', a voice-driven virtual personal assistant, as a major feature in the new iPhone 4S.

Oct: According to a study published in the American Journal of Roentgenology, breast-imaging reports prepared using a speech-recognition system are eight times more likely than conventional dictation transcription reports to contain major errors.

Aug: Microsoft Research claims a breakthrough in speech recognition using Deep Neural Networks (DNNs). The new approach was tested using Switchboard and achieved a word error rate of 18.5% (33% improvement over traditional approaches).

Aug: Nuance acquires Loquendo for $75M.

Jul: SRI International announce a $13 million contract from the Defense Advanced Research Projects Agency's “RATS” (Robust Automatic Transcription of Speech) program to work on noisy and highly degraded speech.

Jul: A coalition of three British Universities - the Universities of Cambridge, Sheffield, and Edinburgh - announces $10 million of funding from Britain's Engineering and Physical Sciences Research Council to work on 'natural speech technology'. The project's objectives are speech software that can adapt to new scenarios and speaking styles, and seamlessly adapt to new situations and contexts almost instantaneously; models and algorithms smart enough to eavesdrop on a meeting, and to be able to sift who spoke what, when, and how. It is also intended to create technologies such as speech synthesizers (for sufferers of stroke or neurodegenerative diseases) that learn from data and that are capable of generating the full expressive diversity of natural speech.

Jun: Google brings its spoken word search from its Android smartphone to home computers.

Jun: Nuance acquires Zurich-based embedded speech software firm SVOX.

Jun: Russia’s biggest retail bank is testing an A.T.M. with a built-in lie detector intended to prevent consumer credit fraud. The voice-analysis system was developed by the Speech Technology Center.

May: Julia Hirschberg receives the IEEE 2011 Jim Flanagan Speech and Audio Processing award.

Feb: Around 90 full-time medical secretary jobs (out of 370) at Leeds hospitals are to be axed over the next two years as a £1.9m speech recognition system is brought in.

Feb: Google rolls out the Google Translate app for the iOS platform. Over 50 languages are supported, 15 of which offer voice input and 23 of which have speech synthesis.

Jan: According to Global Industry Analysts, Inc., the global speech technology market is forecast to reach US$20.9 billion by 2015.

2010

Dec: Ilse Lehiste dies aged 88.

Dec: Google acquires Phonetic Arts, Paul Taylor’s Cambridge-based company formed in 2006 to provide high-quality TTS to the games industry.

Nov: Nuance shares jump 12.15% at the start of the trading day to hit a new two-year high of $19.19 after Apple Inc’s Steve Wozniak says in a tech-blog video clip that Apple has recently purchased Nuance.

Oct: Nuance release ‘SpeechTrans’ for the iPhone, a speech-to-speech translator app.

Sep: In Belgium’s biggest case of corporate fraud, Lernout & Hauspie Speech Products NV’s co-founders Jo Lernout and Pol Hauspie and former top executives Nico Willaert and Gaston Bastiaens were sentenced to three years in prison with an additional two years of probation for artificially inflating revenue.

Sep: Fred Jelinek dies of a heart attack, aged 77, in his faculty office on the Homewood campus of Johns Hopkins University.

Aug: Aurix launches its phonetic speech search and analytics solution, gopher-it 1.1 at Speechtek 2010 in New York.

Jul: Dragon 11 is released with a claimed 15% improvement in accuracy compared to Dragon 10.

Jul: UK version of Dragon dictation software available for the iPhone.

Apr: Researchers in Israel and America announce a way of measuring speech patterns that are inaudible to the human ear in order to test whether apparently healthy people have Parkinson’s disease.

Apr: Google releases 'translate for animals', an Android application to bridge the language gap between animals and humans. Using speech recognition and translation engines, the app analyzes "the neural biological acoustics and compare it with the millions of animal sounds in their animal linguistics database. Once this processing is completed, the animal sounds are translated into plain English." (April 1st!)

Mar: Francisco Lacerda, Professor of Phonetics at Stockholm University, questioned the effectiveness of the voice risk analysis (VRA) system that is being trialled by the UK Government as part of a crackdown on welfare fraud. Lacerda's academic paper was withdrawn by the publisher after legal action was taken by the company behind the technology.

Mar: Google announces that it has moved its automatic speech-recognition and closed-captioning technology out of beta and has now made it available to the YouTube community.

Mar: A study by the University of Edinburgh shows that speech recognition systems find it harder to understand men than women, and that the first word of a sentence is often misunderstood because the machine cannot put the word in context or because the speaker inhales just before talking. The study also found that the reason men tend to be misunderstood more than women is partly because they umm and err more frequently.

Feb: The U.S. Defense Advanced Research Projects Agency (DARPA) launches a program called Robust Automatic Transcription of Speech (RATS) to develop speech transcription, translation and speech signal processing technologies that function effectively in noisy places to support intelligence gathering.

Feb: Inventor Douglas Hines (from the firm TrueCompanion) introduces 'Roxxxy', a life-sized robot priced at $7,000 and equipped with voice-recognition and speech-synthesis software intended to give it a lifelike presence.

Jan: It is revealed that Google scrubs all profanity and dirty words from the text output of the speech-to-text recognition service on the Nexus One (Android 2.1 OS).

Jan: Nuance and Ford unveil enhancements to the speech capabilities powering the next-generation of Ford SYNC(TM), the Ford-exclusive factory-installed, in-car communications and entertainment system.

2009

Dec: Nuance Communications announces that it has acquired SpinVox for $102.5 million.

Dec: "Google 411 is a free application, but it costs $100M to make. However, the speech data generated by Google 411 has given rise to a $2B market. Hence it’s possible to argue that ASR lost and made money." - Jont Allen, IEEE ASRU Workshop, Merano, Italy

Dec: Nuance announces that its Dragon Dictation App is available for free for a limited time in Apple's App Store.

Nov: Google says it is introducing automatic, machine-generated captions for videos on its YouTube site.

Nov: IBM’s Almaden Research Center announces at the Supercomputing Conference (SC09) in Portland that it has created the largest brain simulation to date on a supercomputer: 1 billion neurons connected by 10 trillion individual synapses.

Oct: “Voice is the new touch ... It’s the natural evolution from keyboards and touch screens. Bill Gates articulated this vision a decade ago, and we’re seeing it happen today.” - Zig Serafin, general manager of the Speech at Microsoft group

Sep: Kai-Fu Lee, former Microsoft VP and founding director of the company’s research lab in Beijing, announces that he is quitting as head of Google’s China arm and that he has raised $115 million to create a new incubator for high-tech startups in China.

Aug: Dimension Data announces findings from its 2009 Alignment Index for Speech Self-Service report. The worldwide study reveals that 40% of respondents - up from 36% in 2008 - avoid using speech systems "whenever possible", 42% said they use the Internet instead of the phone, and only 25% reported they would be happy to use a speech-based customer service option again.

Aug: National Institute for Truth Verification (NITV) founder Charles Humble is awarded a second patent for his CVSA II (Computer Voice Stress Analyzer).

Aug: SpinVox demonstrates its technology to journalists to counter claims that its reliance on call centres would hamper its ability to grow.

Jul: SpinVox reveals some details of the technological breakthroughs it has achieved in developing its Voice Message Conversion System (VMCS™). The system's corpus contains over two billion words and phrases derived from the equivalent of 72 years of audio training, making it the world’s largest corpus of spoken language.

Jul: A BBC investigation suggests that the majority of messages sent to SpinVox's Voice Message Conversion System have been transcribed by call centre staff in South Africa and the Philippines. A Facebook group created by staff at an Egyptian call centre that used to work for SpinVox includes a picture of one transcribed message containing what appears to be sensitive commercial information. The firm issues a statement calling the claims that call centres handle a majority of calls "incorrect" and "inaccurate": "speech algorithms do not learn without human intervention and all speech systems require humans for learning - SpinVox does this in real-time" and "The actual proportion of messages automatically converted is highly confidential and sensitive data". The company says that, when necessary, parts of messages can be sent to a 'conversion expert': "We have always been absolutely clear in our communications that humans form an important component of our learning system", adding that the human-mediated training of its system is accomplished "in total security through anonymisation, encryption and randomisation".

Feb: Copyright holders complain about the speech synthesis capability added to the second-generation Kindle ebook reader from Amazon.

Jan: SVOX acquires all of Siemens' speech technology-related intellectual property, including its SpeechAdvance speech recognition product suite and more than 60 patent families.

Jan: Nuance and IBM enter into a licensing and technical services agreement.

Predictions: “The majority of text is created using continuous speech recognition”, “ubiquitous language user interfaces”, “Most routine business transactions take place between a human and an animated visual presence that looks like a human face”, “Pocket-sized reading machines for the visually impaired”, “Listening machines for the deaf”, “Translating telephones commonly used for many language pairs” [Kurzweil, R. (1999). The Age of Spiritual Machines: Phoenix Press.]

2008

Sep: Philips sells its Austria-based speech recognition division to Nuance Communications Inc. for €65 million (US$95 million). The division had sales of €25 million (US$35 million) in 2007, and 170 employees.

Aug: Nuance releases Dragon NaturallySpeaking 10, which is said to be nearly twice as fast and 20% more accurate than the previous version.

Jul: BBN Technologies Chief Scientist John Makhoul is the recipient of the IEEE 2009 James L. Flanagan Speech & Audio Processing award 'for pioneering contributions to speech modeling'.

Jul: Nuance release the results of an In-Car Distraction Study, which measures the positive impact on safety and response times when people use speech recognition to control their in-car systems.

Jun: It is reported that the Asimo humanoid robot can understand three humans shouting at once in a rock-paper-scissors contest. Apparently it uses an array of eight microphones to work out where each voice is coming from.

May: Indesit introduces Sophius, a prototype voice-controlled oven that uses speech technologies from Loquendo and Amuser. The first fully voice-controlled domestic appliance, Sophius allows the user to set the cooking time and temperature or the preset program desired. It is able to understand naturally spoken commands such as “Cook the pizza at 180 degrees for 20 minutes”.

Mar: SpinVox announces its appointment of 'a world-renowned academic' (Tony Robinson) to lead its new Advanced Speech Group (ASG) centre in Cambridge.

Mar: The global speech technology market is expected to reach US$7.8 billion by 2010, with sales of automatic speech recognition products expected to reach about US$7.5 billion. Business portal self-service and call center applications are the biggest sectors for growth and sales. Major factors include the power and speed of the standard PC and enhanced algorithms. Major players in the marketplace include Acapela Group, Active Voice, AT&T Labs, BeVocal, Edify Corporation, Fonix Corp, Genesta, IBM Voice Systems, Intel Corporation, Intervoice, iVoice, Lucent Speech Solutions, Microsoft, Nortel Networks Corporation, Novauris, Nuance Communications, Philips Speech Processing, ScanSoft, Sensory, Speech Interface Group of MIT Media Lab, Telephonetics Interactive Voice Systems, Telisma, Vocollect, VoiceGenie Technologies, VoiceVantage, Voxeo Corporation, Voxify, Voxware, and Wizzard Software.

Feb: Bill Gates tells 1,200 students and faculty members at Carnegie Mellon University that Microsoft is pushing touchscreen and speech technology to replace keyboards - "In five years, we expect more Internet searches to be done through speech than through typing".

Feb: Ray Kurzweil predicts full simulations of human intelligence by 2029 in his keynote talk at the 2008 Game Developers conference.

Feb: Ambient Corporation demonstrates the world's first live voiceless phone call at the Texas Instruments Developer Conference using the Audeo, a wireless sensor built around the ultra-low-power MSP430 microcontroller and worn on the neck to capture the neurological signals that the brain sends to the vocal cords.

Jan: Nearly flawless speech recognition enabled by more powerful mobile chips is placed 7th in a list of 13 future mobile technologies that are predicted to change the way people work.

2007

Jul: PC-World publishes 'The 21 biggest tech flops' which includes speech recognition in the runner-up category. The other flops were: Apple Newton, DAT tape, DIVX, dot-bombs, E-books, PCjr, internet currency, Iridium, Microsoft Bob, Net PC, the paperless office, push technology, smart appliances, virtual reality, Apple Lisa, Dreamcast, NeXT, OS/2, Qube and WebTV.

Jun: Johns Hopkins University is awarded a long-term, multimillion dollar contract ($48.4m to 2015) to establish and operate a Human Language Technology Center of Excellence focusing on advanced technology for automatically analyzing a wide range of speech, text and document image data in multiple languages. Gary W. Strong is appointed as executive director and James K. Baker as director of research.

Jun: Nuance purchases Tegic Communications Inc. which supplies the popular T9 predictive-text software for cellphones, in a deal worth $265 million.

May: Belgium's biggest fraud trial opens against twenty-one people linked to the Lernout & Hauspie speech-recognition company. Charges include allegations that unorthodox accounting practices inflated turnover and lured investors into a scam venture posing as a lofty, groundbreaking company. "We will show that there was no fraud" - Jo Lernout. 13,368 shareholders join with Deminor, a company assisting minority shareholders and investors, to seek redress and demand up to US$300 million (€220 million) in compensation. The courthouse in Ghent is deemed too small to deal with the trial.

May: Nuance acquires VoiceSignal Technologies, Inc.

Apr: Nuance announces Nuance® Recognizer v9.

Apr: Google Labs showcases its speech-recognition technology with a voice search service that delivers local business listings to fixed-line and mobile users. Users dial a toll-free number from any phone to search for businesses by category or name.

Apr: Nuance marks the 10th anniversary of its speech recognition software Dragon NaturallySpeaking. “Speech recognition has come a long way in a relatively short period of time, and we are proud that Dragon NaturallySpeaking has led in accuracy and innovations every step of the way” - Robert Weideman.

Apr: Karen Sparck Jones, emeritus professor of Computers and Information at the University of Cambridge, dies of cancer.

Apr: PC Advisor magazine lists speech recognition among the 20 worst technologies of all time: “Over the years, Bill Gates (among others) has repeatedly predicted that speech recognition will be a major form of input, but it hasn't happened yet. Part of the problem is that, even with 99 percent accuracy, there are still a lot of errors to correct. Plus, many of us use computers in public places where speech recognition would be clumsy, embarrassing or downright rude. Still, the technology continues to improve, and it is being used in niche markets such as in medicine. Maybe someday it'll make it to the rest of us.”

Mar: Microsoft buys privately held speech recognition maker Tellme Networks for $800 million.

Feb: IBM demonstrates everyday applications at its Speech Technology Innovation Conference including in-car products using voice recognition to improve hands-free performance of navigation and entertainment features and call center handling, MASTOR, a speech-to-text-to-speech translator which it deployed in Iraq last year, and TALES, which translates and transcribes foreign-language TV broadcasts and Web sites.

Feb: Microsoft confirms that Windows Vista's speech recognition feature can be hijacked to delete protected files or folders.

Jan: Microsoft launch their new Vista OS that incorporates speech recognition and synthesis.

Jan: IBM releases 'Five innovations that will change the way we live over the next five years' (i.e. 2007-2012) including real-time speech translation.

2006

Jul: At Microsoft's annual Financial Analyst Meeting, Vista product manager Shanen Boettcher gives a disastrous demonstration of the speech recognition technology built into the upcoming Windows Vista software. After several attempts, the dictated words “Dear Mom” were recognised as “Dear Aunt, let's set so double the killer delete select all.” Attempts to correct, undo or delete the error only deepened the mess.

Mar: Leigh Lisker dies of pneumonia aged 87.

Mar: Podzinger, owned by BBN Technologies, launches a new search engine that uses speech-recognition technology to search the Web for online video products created by professional television networks or by amateur enthusiasts.

Mar: Nuance obtains a court order requiring Voice Signal Technologies to provide it with source code in connection with Nuance's trade secret misappropriation lawsuit. Nuance claims that Voice Signal used and 'misappropriated' information and trade secrets relating to Nuance's speech recognition technologies, giving Voice Signal a market advantage in the embedded speech products market. Nuance also claims that Voice Signal infringes a Nuance patent covering voice-activated dialling for mobile phones.

Feb: Nuance acquire Dictaphone Corporation.

Jan: Peter Ladefoged dies of natural causes, aged 80, in London while on his way home from fieldwork in India.

Jan: IBM announce Embedded ViaVoice 4.4, which allows automobile drivers and handheld device users to speak commands naturally without memorising specific predetermined commands.

Jan: IEEE Transactions on 'Speech and Audio Processing' changes its title to 'IEEE Transactions on Audio, Speech, and Language Processing'.

Jan: NEC Corporation announce that they have developed the world's first automatic Japanese-Chinese speech translation software, capable of real-time speech-to-speech translation of travel-related Chinese and Japanese conversation on a PDA.

2005

Nov: Candy Kamm (AT&T) dies.

Oct: Nuance sues Yahoo for allegedly stealing trade secrets by hiring away 13 key engineers (including Larry Heck) who had nearly completed its interactive speech technology project.

Sep: "You have everything to learn from us, and we have nothing to learn from you" - Anne Cutler (INTERSPEECH Lisbon panel discussion on 'bridging the gap between HSR & ASR').

Aug: Spoken Translation, Inc. (STI) showcase Converser™, the world's first system for interactive speech-to-speech and text-to-speech translation at SpeechTek 2005. Converser for Healthcare allows people who don't speak the same language to hold wide-ranging health-related conversations in real time, without a human interpreter.

Jul: Tell-Eureka appoints Roberto Pieraccini (IBM) as chief technology officer.

Jul: Microsoft files lawsuits against Google and Kai-Fu Lee in respect of confidentiality and non-competition agreements.

Jul: Google hires Kai-Fu Lee (Microsoft) as Vice President Engineering and President of Google China.

Jun: The remaining 12 scientists at Rhetorical Systems in Scotland are made redundant by ScanSoft.

May: ScanSoft and Nuance Communications sign an agreement whereby ScanSoft acquires all of the outstanding common stock of Nuance, merging the two organizations into a single company. The transaction is valued at approximately $221 million.

Apr: Samsung announces the SPH-P207 cell phone with dictation software from VoiceSignal.

Mar: According to call centre software firm Genesys Technologies, men are keener than women on using call centre speech recognition systems and the Internet to deal with businesses.

Mar: 20/20 Speech Ltd. formally changes its name to Aurix Ltd.

Mar: Fred Jelinek is awarded the IEEE Jim Flanagan Speech & Audio Processing Award for "fundamental contributions towards the statistical modeling of speech and language" at ICASSP in Philadelphia.

Mar: Joe Olive announces a new DARPA programme called GALE - 'Global Autonomous Language Exploitation' - "to develop and apply computer software technologies to absorb, analyze and interpret huge volumes of speech and text in multiple languages. Automatic processing engines will convert and distil the data, delivering pertinent, consolidated information in easy-to-understand forms to military personnel and monolingual English-speaking analysts in response to direct or implicit requests".

Mar: The DARPA EARS programme is cancelled halfway through its 5-year term.

Feb: Nuance and Acapela Group team to offer text-to-speech software for European customers in 23 languages.

Feb: "There are some things that we are always thinking about. For example, when will speech recognition be good enough for everybody to use that? And we have made a lot more progress this year on that. I think we will surprise people a bit on how well we will do on our speech recognition." - Bill Gates

Feb: James L. Flanagan, former director of the Information Principles Research Laboratory at Bell Laboratories in Murray Hill, N.J., is named as recipient of the 2005 IEEE Medal of Honor.

Jan: Ben Gold, pioneer of speech signal processing, dies.

Jan: ScanSoft closes the acquisition of ART (Advanced Recognition Technologies).

2004

Dec: Charles Wayne retires from DARPA and is replaced by Joe Olive, who announces that, as a consequence, there is no guarantee that the EARS programme will continue beyond Feb 2005.

Nov: ScanSoft and Microsoft announce an expanded worldwide agreement that authorizes Microsoft to deploy ScanSoft text-to-speech (TTS) technology in Microsoft(R) server application product lines, beginning with Microsoft Speech Server 2004.

Nov: ScanSoft acquires Phonetic Systems, ART and Rhetorical. The Phonetic Systems consideration comprises $35 million in cash. The ART transaction is valued at approximately $21.5 million. The Rhetorical consideration is approximately $6.7 million.

Nov: ScanSoft unveil Dragon NaturallySpeaking 8, claimed to be 25 percent more accurate than the previous version. Other additions include text and graphics shortcuts that let users call up boilerplate templates with a few choice words; more flexible formatting of dates, measurements, and acronyms; and support for the Palm Tungsten line of handhelds.

Nov: Nino Varile retires as Head of Unit at the EU in Luxembourg, and is replaced by Geoffrey Bernard Smith.

Oct: Carphone Warehouse terminate takeover talks with speech recognition software specialist Eckoh Technologies.

Oct: Nuance celebrates ten years in the speech industry.

Oct: The Speech Industry Council (SIC) is launched in Australia to promote professional standards and encourage local supply and development for the speech recognition industry in Australia and New Zealand.

Oct: A study in the Journal of Rehabilitation Research & Development shows that average satisfaction with ASR was greater than neutral, but not overwhelmingly positive. Results suggest that a typical user may enter about 16 wpm with ASR, but speeds as low as 3 wpm and as high as 32 wpm were observed. Users who manually type faster than 15 wpm may be less likely to enjoy a speed enhancement through the use of ASR.

Oct: Eckoh Technologies share price shoots up 23 per cent after an announcement that it was in discussions with Carphone Warehouse about a possible takeover.

Oct: Telematics Research Group predicts that 30 million passenger cars - about half the global units - will have voice recognition capability by 2010.

Oct: Jan van Santen is appointed head of Oregon Health & Science University's OGI School of Science & Engineering. OGI's computer science and engineering faculty will now refocus its programme on health-related applications in areas such as speech recognition, adaptive systems, biosensor development, human-computer communication and computational neurology.

Oct: Lernout & Hauspie's auditors agreed to pay $115 million to settle lawsuits by wiped-out investors.

Oct: NEC announces the development of a handheld device that converts spoken Japanese to English and vice versa.

Oct: Paul Taylor takes up a one-year Royal Society Fellowship at CUED on sabbatical from Rhetorical Systems.

Sep: Jim Flanagan retires from his position as director of the Rutgers Center for Advanced Information Processing (CAIP) and as Vice President for Research at Rutgers University.

Sep: Rex, the first fully disposable talking pill bottle, is being supplied by MedivoxRx Technologies to the US Army in Afghanistan. It employs text-to-speech technology from Wizzard to tell patients audibly what medication is in the bottle, along with the appropriate dosage and frequency, refill instructions and warnings. The bottle is completely self-contained and requires no readers, scanners or playback accessories. It is being used to deliver accurate, understandable medical instructions to local people, in their own language and dialect, in an area where people are typically illiterate and rarely speak English.

Sep: BT wins a contract to provide automated speech recognition technology for the UK's National Rail Enquiries telephone service. Apart from allowing callers access to train times, the new automated voice recognition service will be used to handle the overflow during times when there are severe train disruptions or unforeseeable events that result in call centres struggling to deal with the increased volume of calls. BT is working with Eckoh on the service, which is expected to be fully operational by the end of the year.

Sep: Through a partnership with ScanSoft, Cingular Wireless LLC began offering a handset specially designed for blind and vision-impaired people, with software that can convert information on the phone screen - including text messages - to synthesized speech.

Sep: The Sakrament company, the leading developer of Russian speech synthesis and recognition, releases Sakrament Personal Voice Master (PVM) 2.0.

Sep: Fujitsu Laboratories Ltd. and Fujitsu Frontech Limited announce their joint development of a service robot equipped with multiple microphones that enable detection of the direction of a sound source, and can understand and complete simple tasks that are instructed verbally. Utilizing the natural prosody speech synthesis method developed by Fujitsu Laboratories Ltd., the robot is capable of natural, human-like speech. Sales are scheduled to begin in Jun 2005.

Sep: Rob A. Rutenbar (Carnegie Mellon University) receives a $1 million grant from the National Science Foundation to develop a silicon chip to move automatic speech recognition from software into hardware in order to overcome the power limitations of cell phones.

Sep: Gartner reports that Nuance shipped more than 43% of all speech software ports in North America in 2003, and that ScanSoft had 41% of the global market share.

Sep: In a tactical bid to outmaneuver rivals, IBM announces that it will contribute some of its speech-recognition software to two open-source software groups.

Sep: "Speech technologies bridge the gap between computer language and human language", "Speech technology helps computers to figure out what people are thinking, and people to figure out what computers are thinking.", "Speech can be difficult and making it work with everything else makes it even more difficult", "The limitations on the rate of growth of speech technology may not be the person on the phone, it may be how easy it is to develop new applications." - Tim Berners-Lee at SpeechTEK 2004 in New York.

Sep: Speech Synthesis Markup Language 1.0 is published as a W3C Recommendation.

Sep: Bill Byrne leaves JHU to take up a lectureship at CUED.

Aug: ScanSoft announce that "certain transactions had been improperly accounted for at SpeechWorks" and would restate 2000, 2001, 2002 and the first six months of 2003.

Aug: Roger Moore leaves 20/20 Speech to take up a Chair of Computer Science as part of the Speech and Hearing (SpandH) research group at Sheffield University.

Jul: Frost & Sullivan's study - North American Telephony-based Speech Technologies and Solutions Market - acknowledged the contribution of Phonetic Systems to the speech recognition industry with the 2004 Speech Technology Differentiation Award.

Jul: The W3C Voice Browser Working Group releases Speech Synthesis Markup Language (SSML) Version 1.0 as a Proposed Recommendation.

Jul: A song climbing the country music charts - (I Wanna Hear) A Cheatin' Song - features a duet by Anita Cochran and Conway Twitty, who died a decade before the song was written. A computer was used to create Twitty's entirely new vocal out of snippets of his past recordings.

May: ScanSoft launches OpenSpeech Recognizer 3.0, marking the merging of code from the Philips SpeechPearl engine into the SpeechWorks OpenSpeech Recognizer product. Error rates have been reduced by an average of 25 percent, and the number of languages supported is up to 44.

Mar: "Within 10 years speech will be in every device ... Things like speech and [electronic] ink are so natural, when they get the right quality level they will be in everything" - Bill Gates, Gartner Symposium ITxpo 2004.

Mar: Microsoft launch Microsoft® Speech Server 2004. Standard Edition is $7,999 per processor, and Enterprise Edition is $17,999 per processor.

Mar: It is reported that the voice-recognition system for the new $40 adventure for the PlayStation 2 makes so many mistakes that games become an exercise in frustration.

Mar: The Universal Access capabilities of Mac OS X are enhanced to include a spoken interface for those with visual and learning disabilities.

Mar: IP2IPO announces a 43% investment in Phonologica Ltd, a spin-out from King's College London founded by Prof. Roy Pike and Dr. Barbara Forbes. Phonologica's software is anticipated to be computationally very efficient, noise tolerant and applicable to all languages, dialects and accents.

Mar: Melbourne-based Adacel Technologies announces that it will supply speech recognition software systems for the $US200bn global Joint Strike Fighter program.

Feb: ScanSoft files a patent infringement and trade secret misappropriation lawsuit against Voice Signal Technologies, Inc., claiming that Voice Signal infringes a ScanSoft patent covering voice-activated dialling for mobile telephones and has misappropriated ScanSoft confidential information and trade secrets in the field of speech recognition and related technologies.

Feb: "I am greatly concerned the greater use of automated voice recognition systems violates the spirit and intent of New Jersey's 1987 service quality standards. I find the use of this technology for directory assistance calls and for other operator-assisted calls to be a troubling development." - Richard Codey, New Jersey state Senate President.

Jan: Babel Technologies, Elan Speech and Babel Infovox merge to form the Acapela Group.

Jan: Aspect joins Microsoft's Speech Partner Program, endorses SALT and announces the first speech-enabled contact center solution based on Microsoft Speech Server.

Jan: ScanSoft acquires LocusDialog.

Jan: NEC Corporation announces the development of a robot called PaPeRo (Partner-Type Personal Robot) that is capable of Japanese-English/English-Japanese translation of speech input through a microphone.

2003

Dec: John Oberteuffer retires as director and chief technology officer of Fonix.

Dec: Quentin Summerfield stands down as deputy director of the Medical Research Council's Institute of Hearing Research in Nottingham and takes up a chair in the Psychology Department at the University of York.

Dec: Domain Dynamics re-brands itself as Equivox.

Dec: At the IEEE ASRU workshop (US Virgin Islands) an Academic panel is asked "Is there anything fundamentally wrong with HMMs?" and an Industrial panel is asked "When will ASR companies be hiring again?".

Nov: At LangTech (Paris) Peter Ryan of Datamonitor says that telephony voice application revenues will grow at ~30% per annum over the next 4 years.

Nov: Microsoft releases 'Voice Command (version 1)' at Handago.com and PocketPC.com for $39.95, for PDA or cell phone users using the Windows Mobile 2003 software on their PocketPC.

Oct: At SpeechTek the ten most influential people in the speech technology industry over the previous year are announced: Bruce Balentine, executive vice president and chief scientist, Enterprise Integration Group; Steve Chambers, general manager, Network Speech Solutions, ScanSoft; Dr. Mike Cohen, co-founder, Nuance; Dr. James Larson, manager, Advanced Input/Output, Intel; Dr. Kai-Fu Lee, corporate vice president, Natural Interactive Services Division, Microsoft; Dr. Judith Markowitz, president, J. Markowitz and Associates; Dr. William Meisel, president, TMA Associates; Stuart Patterson, president, ScanSoft; Paul Ricci, chief executive officer, ScanSoft; Lynda Kate Smith, vice president and chief marketing officer, Nuance.

Oct: Speech Technology Magazine awards a Lifetime Achievement Award to Dr. Janet Baker, co-founder, president and later chairman & CEO of Dragon Systems (which grew to nearly $70 million in annual revenue and 380 employees).

Oct: Steve Renals (ex. Univ. Sheffield) is appointed Chair of Speech Technology at CSTR in Edinburgh.

Sep: The overall consensus at Eurospeech (Geneva) is that current approaches to ASR are running out of steam, speech companies are running out of money, and research people are migrating back to academia and large corporations.

Aug: Prof. Antonio Zampolli, one of the pioneers of Computational Linguistics, dies in Pisa in a terrible accident caused by fire.

Aug: An agreement is reached for the acquisition of Vocalis Limited by Netprofessions Limited, a subsidiary of Netdecisions Holdings Limited, for a consideration of £1.

Jul: Vocalis's shares are suspended by the London Stock Exchange.

Jul: Vocalis shares plunge 0.88p (36.8%) to 1.50p after the group reveals that it has just three months left before its cash runs out.

Jun: ScanSoft announces a partnership deal with Microsoft Assistive Technology Group, which works with technology vendors and developers to create products that people with disabilities can use more easily. ScanSoft stock rises by 15% after the agreement is announced.

May: Aculab decide to stop working on speech technology.

Apr: Bishnu Atal is awarded the Benjamin Franklin Medal in Electrical Engineering by the Franklin Institute for his contributions to speech coding.

Apr: ScanSoft announces a set of agreements with IBM through which the companies will extend speech technology and applications across enterprise, desktop and multimodal environments. The relationship encompasses dictation applications (such as IBM's ViaVoice), telephony-based speech recognition technology and applications (such as IBM's WebSphere platform), and text-to-speech solutions (such as ScanSoft's RealSpeak).

Apr: SpeechWorks announces the availability of its SpeakFreely(TM) 2.0 natural language speech solution.

Apr: ScanSoft Inc. and SpeechWorks International Inc. announce that they will merge, with ScanSoft to buy all the outstanding common stock of SpeechWorks in a deal valued at $115 million. Combined, the two companies employ about 900 people.

Apr: SpeechWorks introduce ETI-Eloquence SF(TM), an embedded TTS engine that offers high-quality voice output in thirteen languages with a memory footprint of under 90 KB per language.

Apr: According to Frost & Sullivan, the voice recognition market - worth $107m (£69m) in 2002 - will rise to $1.24bn in 2009.

Mar: Novauris demonstrates an ASR system capable of accessing any of a quarter of a billion items, equivalent to all addresses in the US.

Mar: Sony's SDR-4X II robot features a 20,000 word ASR unit.

2002

Dec: The EC releases the call for the 6th Framework Information Society Technologies (IST) programme, which is focused on 'Ambient Intelligence'.

Dec: 20/20 Speech makes 12 staff redundant, bringing the total staffing down to 28.

Dec: US private investment firm Carlyle Group buys a 33.8% stake in QinetiQ, the MoD's defence research firm, valuing it at around £500 million.

Dec: Vocalis launch a share placement and open offer to raise £4.1m to stave off being put into administration.

Nov: Voice Signal Technologies announces that Dr. Victor Zue, a member of their Board of Directors, has been presented with Speech Technology Magazine's first-ever Lifetime Achievement Award for his pioneering work in the field of speech recognition and speech interface design.

Oct: Microsoft, AT&T and KTH announce the free availability of the Entropic Speech Processing System (ESPS) libraries and waves+ software for analyzing speech signals, via KTH's WaveSurfer tool.

Oct: ScanSoft and Philips announce that the companies have signed a definitive agreement whereby ScanSoft will acquire the Philips Speech Processing Telephony and Voice Control business units, and related intellectual property.

Sep: Daniel Jurafsky, an associate professor of linguistics and computer science at the University of Colorado in Boulder, wins a $500,000 "no-strings" grant from the John D. and Catherine T. MacArthur Foundation.

Sep: At ICSLP in Denver, ISCA announces that PC-ICSLP has agreed to an annual series of InterSpeech conferences.

Aug: LIPsync ceases operations.

Aug: Aaron Rosenberg retires from AT&T after a career lasting 45 years.

Aug: Nuance announces that it will reduce its worldwide workforce by approximately 23 percent, or 90 employees.

Jul: Nuance and SpeechWorks see their stock reach an all-time low. Nuance, whose shares were once valued at $160 on Nasdaq, is listed at $3.88 a share. Similarly, Nasdaq-listed SpeechWorks plummets in value from $100 a share to $4.

Jul: SpeechWorks announces that it is taking steps to achieve an approximate 20% reduction of its current expense base over the next four quarters.

Jun: The World Wide Web Consortium (W3C) issues the Speech Recognition Grammar Specification as a W3C Candidate Recommendation.

Jun: Market analysis firm Datamonitor expects the voice business market to return to positive growth during the second half of 2002 and grow quickly through 2007. The whole market (including platforms, enabling software, applications, and services) will be worth $4.33 billion.

May: The Kelsey Group has determined that SpeechWorks holds the leading share (33%) of the ASR market.

Apr: The Kelsey Group estimates worldwide revenues from core speech technologies - automated speech recognition (ASR), text-to-speech (TTS), natural language understanding (NLU), embedded speech and attendant infrastructure hardware and software - will grow from $505 million in 2001 to more than $2 billion in 2006.

Apr: In the industry's first published accuracy benchmark test (conducted by CT Labs), Nuance is recognised as offering speech recognition software that performs, on average, 30 percent more accurately than its closest competitor, SpeechWorks.

Apr: Melvyn Hunt announces that all his former colleagues at Phonetic Systems UK (and Dragon Systems UK R&D before that) have joined John Bridle and himself at Novauris, a new speech research company set up by Jim Baker.

Apr: Larry Rabiner joins Advanced Recognition Technologies (ART) Inc.

Apr: 20/20 Speech launches its low-memory text-to-speech software product, Aurix® tts.

Apr: VercomNet closes down due to lack of income and sells the Speech Technology Network (and details of 800 'experts') to ELSNET for 2500 Euros.

Mar: Victor Zue is appointed as a Director of Voice Signal Inc.

Feb: Larry Rabiner retires from AT&T Labs.

Jan: Herald Investment Trust injects £394,873 into Vocalis as part of a £4.1m rescue package designed to prevent the company from going into immediate administration. Vocalis shares, which reached a high of £10.57½ in February 2000, fall ¼p to 6¾p.

Jan: Bill Ainsworth (Professor of Speech Communication in the Department of Communication and Neuroscience at the University of Keele) dies unexpectedly of a heart attack.

Jan: Fonix acquires DECtalk.

2001

Dec: Herman Steeneken retires from TNO in Holland.

Dec: A U.S. bankruptcy judge approves the sale of Lernout & Hauspie's core speech recognition assets to ScanSoft Inc. following a last-minute contest over the fairness of the auction process. The ruling clears the way for a deal that is expected to bring about $51 million to creditors: $37.36 million in ScanSoft stock, $10 million in cash and a $3.5 million note. The judge dismisses SpeechWorks' challenge, although she labels the bidding process "an extremely rough and tumble process."

Nov: SpeechWorks International, Inc. issues a press release challenging the results of the auction conducted by Lernout & Hauspie Speech Products NV for the sale of its speech and language technology assets.

Nov: ScanSoft Inc. announces that it has agreed to acquire the Speech and Language Technologies business of Lernout & Hauspie Speech Products N.V. and L&H Holdings USA, Inc., including substantially all of the operating and technology assets, in a bankruptcy auction.

Nov: John Bridle and Melvyn Hunt are declared redundant by Phonetic Systems without warning and with immediate effect.

Nov: Toshiba Research Europe Ltd (TREL) extends the research activities at its Cambridge Research Laboratory (CRL) to include speech recognition and synthesis.

Nov: The L&H groups are offered for sale together or separately, with the following minimum bids at auction: text-to-speech - $7M; L&H speech processing/dialog (and automotive) - $3M; Dragon speech processing/dialog - $6M; intelligent content management - $1.5M; Knexyx - $1.5M; ISI speech processing/dialog - $0.8M; audiomining - $0.75M; machine translation - $0.5M.

Oct: Lernout & Hauspie (once Europe's second largest software firm) is declared bankrupt after struggling for nearly a year to recover from the scandal that crippled the company.

Sep: The mother of Prof Pat Keating (UCLA) is killed in one of the hijacked planes that destroyed the World Trade Centre in New York and part of the Pentagon in Washington on 11th Sep.

Sep: At Eurospeech'2001 in Aalborg, Steve Greenberg states that "speech technology is a train crash waiting to happen"!

Aug: Phonetic Systems and Siemens form a partnership.

Aug: Visteon Corporation and SpeechWorks partner "to accelerate the power and appeal of speech applications in vehicles and other devices".

Jul: The Court of Appeals in Ghent sets Jo Lernout and Pol Hauspie free on condition that they do not communicate with each other, do not leave the country, and cooperate with investigators looking into fraud and other charges against them. The court also frees L&H partner Nico Willaert on the same conditions.

Jun: Charles Halle resigns as CEO of Vocalis as shares hit low of 10p (12 months after hitting a peak of ~£3.50).

Jun: Gaston Bastiaens is extradited to his native Belgium to face charges of fraud, insider trading, stock market manipulation and accounting law violations. The arrest warrant accuses Bastiaens of several acts of fraud, including forging documents.

May: Ex-L&H CEO Gaston Bastiaens is arrested by state troopers in his Boston garden.

May: Lernout & Hauspie Speech Products announces voluntary de-listing of stock from NASDAQ Europe.

May: Philips Speech Processing announces that its speech recognition technology is now able to push the limits of large vocabulary speech recognition beyond one million words in real-time without delay (for fully automated speech-driven directory assistance applications).

May: Jo Lernout and Pol Hauspie are jailed for one month on charges of fraud.

Apr: Dragon-UK announces that all staff have resigned from L&H. John Bridle, Melvyn Hunt and six others join Phonetic Systems Inc - a US/Israeli company using Entropic software for directory enquiries. Maha Kadirkamanathan and Bernard Payne set up shop in Bristol for Visteon (with their C-REC recogniser).

Apr: Speech Machines are bought out by MedQuist (part of Philips).

Mar: L&H announce the sale of their small vocabulary recogniser - C-REC (built by Dragon UK) - to Visteon for $13M.

Mar: Nuance announces that it is providing the speech recognition technology for the world's first completely voice-driven telephone for the wireless market.

Mar: The new L&H chief executive, Philippe Bodson, forces out the company's co-founder, Jo Lernout.

Feb: Autonomy announces that its iVoice speech recognition technology has shipped to its first customers. This is the first commercial implementation of Autonomy's iVoice technology, which was developed with the speech recognition software that Autonomy acquired from SoftSound Ltd. in 2000.

Jan: Nuance launches 'Vocalizer', a line of advanced text-to-speech software products that bring a human-sounding voice to a wide range of applications such as e-mail reading, voice dialing, stock quotes, driving directions, order management and customer support.

Jan: 20/20 announces the first live broadcast by the BBC using 'Aurix ST' automatic subtitling technology.

Jan: Rhetorical Group plc, the Edinburgh based speech synthesis company, announces the completion of a £2 million fund raising through a private placing by Bell Lawrie with institutional investors and venture capital trusts. At the placing price, Rhetorical is valued at £14 million.

Jan: Pol Hauspie (founder and former co-chairman of troubled speech technology company Lernout & Hauspie) is being investigated for possible money laundering. 800 jobs are cut (20% reduction in workforce). John Duerden (ex-head of Dictaphone) resigns as chief executive and member of the board of directors of L&H.

2000

Dec: SpeechWorks acquire Eloquent Technology, Inc., a supplier of state-of-the-art speech synthesis (text-to-speech, TTS) technology, in a stock and cash transaction comprising $5.25 million in cash and approximately 300,000 shares of SpeechWorks common stock.

Dec: L&H in talks to sell parts of its business.

Nov: 20/20, BBC and Softel win a Royal Television Society Technical Innovation Award in the Innovative Applications category.